In this section, I'll review the notation for sums and products.

Addition and multiplication are binary operations: They operate on two numbers at a time. If you want to add or multiply more than two numbers, you need to group the numbers so that you're only adding or multiplying two at once.

Addition and multiplication are associative, so it should not matter how I group the numbers when I add or multiply. But the associative law is stated for only three numbers at a time; for instance, for addition,

$$(a + b) + c = a + (b + c).$$

But why does this work for (say) two ways of grouping a sum of 100 numbers?

It turns out that, using induction, it's possible to prove that any two ways of grouping a sum or product of $n$ numbers, for $n \ge 3$, give the same result.
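The claim can be spot-checked by brute force. The sketch below (the helper name `all_groupings` is hypothetical) recursively enumerates every way of fully parenthesizing a short list, combining the groups with a binary operation, and collects the resulting values. It uses exact `Fraction` arithmetic. Checking one list is evidence, not a proof; the induction argument is what covers every $n$.

```python
import operator
from fractions import Fraction


def all_groupings(nums, op):
    """Return op-combined values for every full parenthesization of nums.

    A grouping splits nums into a left part and a right part, groups each
    part recursively, and combines the two results with the binary op.
    """
    if len(nums) == 1:
        return [nums[0]]
    values = []
    for split in range(1, len(nums)):
        for left in all_groupings(nums[:split], op):
            for right in all_groupings(nums[split:], op):
                values.append(op(left, right))
    return values


nums = [Fraction(1, 2), Fraction(-3), Fraction(5, 7), Fraction(2), Fraction(1, 3)]
sums = all_groupings(nums, operator.add)
prods = all_groupings(nums, operator.mul)
print(len(sums))                        # 14 groupings of 5 numbers
print(len(set(sums)), len(set(prods)))  # 1 1: every grouping agrees
```

Exact arithmetic matters here: Python floats are not associative, so `(0.1 + 0.2) + 0.3 == 0.1 + (0.2 + 0.3)` evaluates to `False`.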

Taking this for granted, I can define the sum of a list of numbers $a_1, a_2, \ldots, a_n$ recursively as follows.

A sum of a single number is just the number:

$$\sum_{i=1}^{1} a_i = a_1.$$

I know how to add two numbers:

$$\sum_{i=1}^{2} a_i = a_1 + a_2.$$

To add $n$ numbers for $n \ge 3$, I add the first $n - 1$ of them (which I assume I already know how to do), then add the $n$-th number $a_n$:

$$\sum_{i=1}^{n} a_i = \left(\sum_{i=1}^{n-1} a_i\right) + a_n.$$

Note that this is a particular way of grouping the $n$ summands: Make one group with the first $n - 1$ terms, and add it to the last term. By associativity (as noted above), this way of grouping the sum should give the same result as any other way.

Therefore, I might as well omit parentheses and write

$$\sum_{i=1}^{n} a_i = a_1 + a_2 + \cdots + a_n.$$
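The recursive definition translates directly into a recursive function. In this sketch (the function names are hypothetical), Python lists are 0-indexed, so `a[0]` plays the role of $a_1$; the general step also reproduces the two-term case. A second helper prints the particular grouping the recursion produces.

```python
def recursive_sum(a):
    """Sum a[0], ..., a[n-1] (n >= 1) by the recursive definition.

    One term: the sum is that term. Otherwise: sum the first n - 1
    terms (recursively), then add the last term. For n = 2 this is
    just a[0] + a[1], matching the two-term case.
    """
    if not a:
        raise ValueError("the recursive definition starts at n = 1")
    if len(a) == 1:
        return a[0]
    return recursive_sum(a[:-1]) + a[-1]


def show_grouping(names):
    """Return the parenthesization that recursive_sum uses, as a string."""
    if len(names) == 1:
        return names[0]
    return f"({show_grouping(names[:-1])} + {names[-1]})"


print(recursive_sum([3, 1, 4, 1, 5]))           # 14
print(show_grouping(["a1", "a2", "a3", "a4"]))  # (((a1 + a2) + a3) + a4)
```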

The product of a list of numbers $a_1, a_2, \ldots, a_n$ is defined recursively in a similar way.

A product of a single number is just the number:

$$\prod_{i=1}^{1} a_i = a_1.$$

I know how to multiply two numbers:

$$\prod_{i=1}^{2} a_i = a_1 a_2.$$

To multiply $n$ numbers for $n \ge 3$, I multiply the first $n - 1$ of them (which I assume I already know how to do), then multiply the result by the $n$-th number $a_n$:

$$\prod_{i=1}^{n} a_i = \left(\prod_{i=1}^{n-1} a_i\right) \cdot a_n.$$

Again, I can drop the parentheses and write

$$\prod_{i=1}^{n} a_i = a_1 a_2 \cdots a_n.$$
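The same recursion with multiplication, as a sketch (the name `recursive_product` is hypothetical). For comparison, Python 3.8 and later provide `math.prod`, which computes the product of the elements of an iterable.

```python
import math


def recursive_product(a):
    """Multiply a[0], ..., a[n-1] (n >= 1) by the recursive definition:
    multiply the first n - 1 terms recursively, then multiply by the last."""
    if not a:
        raise ValueError("the recursive definition starts at n = 1")
    if len(a) == 1:
        return a[0]
    return recursive_product(a[:-1]) * a[-1]


values = [2, 3, 5, 7]
print(recursive_product(values))  # 210
print(math.prod(values))          # 210 (standard library, Python 3.8+)
```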

Remark. Some people define the sum and product of no numbers as follows: The sum of no numbers (sometimes called the empty sum) is defined to be 0, and the product of no numbers (sometimes called the empty product) is defined to be 1. These definitions are useful when you're taking the sum or product of numbers in a set, rather than the sum or product of numbers indexed by a range of consecutive integers.

For example, suppose ![](). Then, to use summation notation to write the sum of the squares of the elements of $S$, you might write

$$\sum_{x \in S} x^2.$$

Similarly, to write the product of the reciprocals of the numbers in S, you might write

$$\prod_{x \in S} \frac{1}{x}.$$

If the set over which you sum or multiply varies during a discussion, it might turn out to be empty. The definitions of the empty sum and the empty product cover this case. For instance,

$$\sum_{x \in \emptyset} x^2 = 0.$$

That is, the sum of the squares of the elements of the empty set is 0.
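Python's built-in `sum` and `math.prod` (Python 3.8+) follow the same conventions as the Remark: over an empty iterable they return 0 and 1, respectively (`math.prod` starts from a default `start` value of 1). The sketch below uses a hypothetical set `S = {1, 2, 4}`, not the set from the example, and exact `Fraction` arithmetic for the reciprocals.

```python
import math
from fractions import Fraction

S = {1, 2, 4}  # hypothetical example set
sum_of_squares = sum(x**2 for x in S)
product_of_reciprocals = math.prod(Fraction(1, x) for x in S)
print(sum_of_squares)          # 21
print(product_of_reciprocals)  # 1/8

empty = set()
print(sum(x**2 for x in empty))                  # 0 (empty sum)
print(math.prod(Fraction(1, x) for x in empty))  # 1 (empty product)
```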

The following properties of sums, and the corresponding properties of products given below, are fairly obvious, but careful proofs require induction.

Proposition. (Properties of sums)

(a) $\displaystyle \sum_{i=1}^{n} (a_i + b_i) = \sum_{i=1}^{n} a_i + \sum_{i=1}^{n} b_i$.

(b) $\displaystyle \sum_{i=1}^{n} c\,a_i = c \sum_{i=1}^{n} a_i$.

(c) $\displaystyle \sum_{i=1}^{n} c = nc$.

Proof. I'll prove (c) as an example. I'll use induction. For $n = 1$, the left side is $\sum_{i=1}^{1} c = c$ and the right side is $1 \cdot c = c$. The result holds for $n = 1$.

Assume that the result holds for n:

$$\sum_{i=1}^{n} c = nc.$$

Then for $n + 1$, I have

$$\sum_{i=1}^{n+1} c = \left(\sum_{i=1}^{n} c\right) + c = nc + c = (n + 1)c.$$

The first equality used the recursive definition of a sum. The second equality follows from the induction hypothesis. The final equality is just basic algebra.

Properties (a) and (b) can also be proved using induction.
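A randomized spot-check of three sum identities, written out here explicitly as $\sum_{i=1}^{n}(a_i + b_i) = \sum_{i=1}^{n} a_i + \sum_{i=1}^{n} b_i$, $\sum_{i=1}^{n} c\,a_i = c\sum_{i=1}^{n} a_i$, and $\sum_{i=1}^{n} c = nc$. Integer inputs keep the arithmetic exact. Passing for $n = 1, \ldots, 20$ is consistent with the proposition but is not a proof. The helper name `check_sum_properties`, the seed, and the integer ranges are arbitrary choices for illustration.

```python
import random


def check_sum_properties(n, c, rng):
    """Test the three sum identities on random integer lists of length n."""
    a = [rng.randint(-50, 50) for _ in range(n)]
    b = [rng.randint(-50, 50) for _ in range(n)]
    prop_a = sum(x + y for x, y in zip(a, b)) == sum(a) + sum(b)
    prop_b = sum(c * x for x in a) == c * sum(a)      # constants factor out
    prop_c = sum(c for _ in range(n)) == n * c        # n copies of c
    return prop_a and prop_b and prop_c


rng = random.Random(0)
print(all(check_sum_properties(n, 7, rng) for n in range(1, 21)))  # True
```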

Example. Compute the following sums:

(a) ![]().

(b) ![]().

(c) ![]().

(d) ![](), where $c$ is a constant.

(a)

![]()

(b)

![]()

Note that sums need not start indexing at 1.

(c)

![]()

Note that the summation variable can be anything that you want.

(d)

![]()
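The remarks about starting indices and summation variables can be seen concretely in code. The sketch below uses a hypothetical helper `sigma(f, lower, upper)` for $\sum_{k=\text{lower}}^{\text{upper}} f(k)$; Python's `range` stops before its second argument, so the upper limit needs a `+ 1`. The sum used, $\sum_{k=3}^{6} k^2 = 86$, is an illustration, not one of the sums in the example.

```python
def sigma(f, lower, upper):
    """Return f(lower) + f(lower + 1) + ... + f(upper).

    range() stops *before* its second argument, hence upper + 1.
    If lower > upper the range is empty and the result is 0 (an empty sum).
    """
    return sum(f(k) for k in range(lower, upper + 1))


print(sigma(lambda k: k**2, 3, 6))        # 86: 9 + 16 + 25 + 36
print(sigma(lambda j: j**2, 3, 6))        # 86: renaming the index changes nothing
print(sigma(lambda j: (j + 2)**2, 1, 4))  # 86: same terms, shifted index
```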

Example. Compute the exact value of ![]().

Note that

![]()

So

![]()

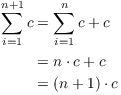

I'll write out a few of the terms:

Most of the terms cancel in pairs, with the negative term in one expression cancelling with the positive term in the next expression. Only the first term and the last term are left, and they give the value of the sum:

![]()

This is an example of a telescoping sum.

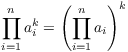

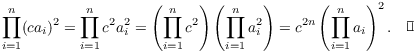
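The telescoping cancellation can be checked with exact rational arithmetic. This sketch assumes a summand of the form $f(k) - f(k+1)$, the pattern in which each negative term cancels the positive term of the next expression, so that $\sum_{k=1}^{n} \bigl(f(k) - f(k+1)\bigr) = f(1) - f(n+1)$. The choice $f(k) = 1/k$ and the function names are hypothetical, not taken from the example.

```python
from fractions import Fraction


def f(k):
    # Hypothetical choice of f, used only for illustration.
    return Fraction(1, k)


def telescoping_sum(n):
    """Add (f(k) - f(k + 1)) for k = 1, ..., n, term by term."""
    return sum(f(k) - f(k + 1) for k in range(1, n + 1))


# Only the first and last terms survive: f(1) - f(n + 1).
print(all(telescoping_sum(n) == f(1) - f(n + 1) for n in range(1, 51)))  # True
print(telescoping_sum(100))  # 100/101
```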

Proposition. (Properties of products)

(a) $\displaystyle \prod_{i=1}^{n} a_i b_i = \left(\prod_{i=1}^{n} a_i\right)\left(\prod_{i=1}^{n} b_i\right)$.

(b) $\displaystyle \prod_{i=1}^{n} c\,a_i = c^n \prod_{i=1}^{n} a_i$.

(c) $\displaystyle \prod_{i=1}^{n} c = c^n$.

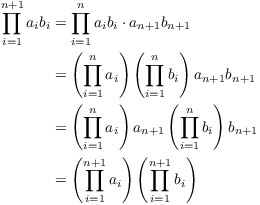

Proof. I'll prove (a) by way of example. The proof will use induction on $n$.

For $n = 1$, the left side is $\prod_{i=1}^{1} a_i b_i = a_1 b_1$. The right side is $\left(\prod_{i=1}^{1} a_i\right)\left(\prod_{i=1}^{1} b_i\right) = a_1 b_1$. The result holds for $n = 1$.

Assume that the result holds for n:

$$\prod_{i=1}^{n} a_i b_i = \left(\prod_{i=1}^{n} a_i\right)\left(\prod_{i=1}^{n} b_i\right).$$

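Then for $n + 1$, I have

$$\begin{aligned}
\prod_{i=1}^{n+1} a_i b_i &= \left(\prod_{i=1}^{n} a_i b_i\right) a_{n+1} b_{n+1} \\
&= \left(\prod_{i=1}^{n} a_i\right)\left(\prod_{i=1}^{n} b_i\right) a_{n+1} b_{n+1} \\
&= \left[\left(\prod_{i=1}^{n} a_i\right) a_{n+1}\right]\left[\left(\prod_{i=1}^{n} b_i\right) b_{n+1}\right] \\
&= \left(\prod_{i=1}^{n+1} a_i\right)\left(\prod_{i=1}^{n+1} b_i\right).
\end{aligned}$$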

The first equality follows from the recursive definition of a product. The second equality uses the induction hypothesis. The third equality comes from commutativity of multiplication. And the fourth equality is a result of the recursive definition of a product again.

Properties (b) and (c) can also be proved by induction.
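A randomized spot-check of three product identities, written out here as $\prod_{i=1}^{n} a_i b_i = \left(\prod_{i=1}^{n} a_i\right)\left(\prod_{i=1}^{n} b_i\right)$, $\prod_{i=1}^{n} c\,a_i = c^n \prod_{i=1}^{n} a_i$, and $\prod_{i=1}^{n} c = c^n$. As with sums, this is evidence, not a proof. The helper name, seed, and ranges are arbitrary; small integers keep the products exact.

```python
import math
import random


def check_product_properties(n, c, rng):
    """Test the three product identities on random integer lists of length n."""
    a = [rng.randint(-9, 9) for _ in range(n)]
    b = [rng.randint(-9, 9) for _ in range(n)]
    prop_a = math.prod(x * y for x, y in zip(a, b)) == math.prod(a) * math.prod(b)
    prop_b = math.prod(c * x for x in a) == c**n * math.prod(a)  # c appears n times
    prop_c = math.prod(c for _ in range(n)) == c**n
    return prop_a and prop_b and prop_c


rng = random.Random(1)
print(all(check_product_properties(n, 3, rng) for n in range(1, 16)))  # True
```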

Example. Compute the following products:

(a) ![]().

(b) ![](), where $c$ is a constant.

(c) ![]().

(a)

![]()

(b)

(c)

![]()

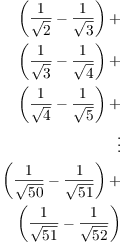

Example. Compute the exact value of ![]().

Note that

![]()

So

![]()

I'll write out a few of the terms:

![]()

Most of the terms in the numerators cancel with the terms in the denominators "two fractions ahead". Only the first two denominators and the last two numerators don't cancel, and they give the value of the product:

![]()

This is an example of a telescoping product.
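The "two fractions ahead" cancellation can be checked exactly. This sketch assumes factors of the form $g(k+2)/g(k)$, so the numerator of each factor cancels the denominator two factors later and
$$\prod_{k=1}^{n} \frac{g(k+2)}{g(k)} = \frac{g(n+1)\,g(n+2)}{g(1)\,g(2)}.$$
The choice $g(k) = k$ (giving $(n+1)(n+2)/2$) and the function names are hypothetical, not the product from the example.

```python
import math
from fractions import Fraction


def g(k):
    # Hypothetical choice of g; any function that is nonzero for k >= 1 works.
    return k


def telescoping_product(n):
    """Multiply g(k + 2) / g(k) for k = 1, ..., n, term by term."""
    return math.prod(Fraction(g(k + 2), g(k)) for k in range(1, n + 1))


def surviving_terms(n):
    """Last two numerators over the first two denominators."""
    return Fraction(g(n + 1) * g(n + 2), g(1) * g(2))


print(all(telescoping_product(n) == surviving_terms(n) for n in range(1, 51)))  # True
print(telescoping_product(10))  # 66
```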

If $n$ is a positive integer, $n!$ (read "$n$ factorial") is the product of the integers from 1 to $n$:

$$n! = 1 \cdot 2 \cdot 3 \cdots n = \prod_{k=1}^{n} k.$$

For example,

![]()

By convention, $0!$ is defined to be 1. This definition agrees with the extension of factorials using the Gamma function, described below. It is also the appropriate definition when factorials come up in binomial coefficients.

Note that

![]()
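Computing $n!$ as a running product that starts at 1 makes the convention $0! = 1$ a consequence of the empty-product convention in the Remark on empty sums and products: for $n = 0$ the loop body never runs. The sketch compares against the standard library's `math.factorial` and `math.comb` (Python 3.8+); the function name `factorial` is a hypothetical choice.

```python
import math


def factorial(n):
    """n! as the product 1 * 2 * ... * n; for n = 0 this is the empty product 1."""
    if n < 0:
        raise ValueError("n must be a nonnegative integer")
    result = 1  # value of the empty product
    for k in range(1, n + 1):
        result *= k
    return result


print([factorial(n) for n in range(6)])  # [1, 1, 2, 6, 24, 120]
# With 0! = 1, n! / (0! * n!) = 1, which matches the binomial coefficient C(n, 0).
n = 7
print(factorial(n) // (factorial(0) * factorial(n)), math.comb(n, 0))  # 1 1
```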

There is a way of extending the definition of factorials to positive real numbers (so that, for instance, ![]() is defined). The Gamma function is defined by

$$\Gamma(x) = \int_0^\infty t^{x-1} e^{-t}\,dt.$$

(Note that $x$ here is a real number.) If $n$ is a positive integer,

$$\Gamma(n) = (n - 1)!.$$

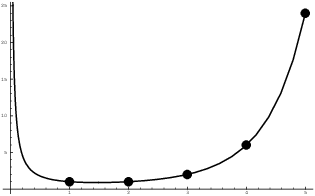

Here's a graph of the Gamma function:

I've placed dots at the points $(n, (n - 1)!)$ so you can see that the Gamma function really goes through the factorial points.

Note also that $\Gamma(1) = \int_0^\infty e^{-t}\,dt = 1$. But if I plug $n = 1$ into the formula above, I get $\Gamma(1) = (1 - 1)! = 0!$, i.e. $1 = 0!$. Thus, $0! = 1$, which is the convention I mentioned earlier.

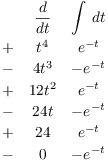
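Python's `math.gamma` computes the Gamma function $\Gamma(x) = \int_0^\infty t^{x-1} e^{-t}\,dt$, so the relation $\Gamma(n) = (n - 1)!$ for positive integers $n$, including the special case $\Gamma(1) = 0! = 1$, can be spot-checked numerically. Because `math.gamma` returns a float, this sketch compares with `math.isclose` rather than `==`.

```python
import math

# Gamma(n) should equal (n - 1)! for positive integers n.
matches = all(math.isclose(math.gamma(n), math.factorial(n - 1)) for n in range(1, 21))
print(matches)                           # True
print(math.gamma(1), math.factorial(0))  # 1.0 1
```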

Example. Evaluate ![]() using the integral definition.

![]()

Find the antiderivative using integration by parts:

![]()

So the definite integral is

![]()

All the b-terms go to 0, because

![]()

Note that ![](), so ![]().
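As a numerical cross-check of the integral definition, here is a swapped-in technique (composite Simpson's rule, not the integration by parts used in the example). The sketch truncates the integral $\int_0^b t^{x-1} e^{-t}\,dt$ at a finite $b$, which plays the role of the $b$ that goes to infinity, and compares the result with `math.gamma`. The cutoff `b = 60`, the step count, and the name `gamma_integral` are assumptions chosen for illustration; it also assumes $x \ge 1$ so the integrand is finite at $t = 0$.

```python
import math


def gamma_integral(x, b=60.0, steps=20000):
    """Approximate the integral of t**(x - 1) * e**(-t) over [0, b].

    Composite Simpson's rule with an even number of steps; assumes x >= 1
    so the integrand is finite at t = 0. The cutoff b stands in for the
    limit b -> infinity (the tail beyond b = 60 is negligible for small x).
    """
    if steps % 2:
        raise ValueError("Simpson's rule needs an even number of steps")
    h = b / steps
    total = 0.0
    for i in range(steps + 1):
        t = i * h
        weight = 1 if i in (0, steps) else (4 if i % 2 else 2)
        total += weight * t ** (x - 1) * math.exp(-t)
    return total * h / 3


for x in (1, 2, 3, 4, 5):
    print(x, gamma_integral(x), math.gamma(x))
```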

Copyright 2019 by Bruce Ikenaga