If R is a ring, the ring of polynomials in x with coefficients in R is denoted $R[x]$. It consists of all formal sums

$$\sum_{i=0}^\infty a_i x^i, \quad a_i \in R.$$

Here $a_i = 0$ for all but finitely many values of i.

If the idea of "formal sums" worries you, replace a formal sum with the infinite vector whose components are the coefficients of the sum:

$$(a_0, a_1, a_2, \ldots)$$

All of the operations which I'll define using formal sums can be defined using vectors. But it's traditional to represent polynomials as formal sums, so this is what I'll do.

A nonzero polynomial $\sum_{i=0}^\infty a_i x^i$ has degree n if $n \ge 0$ and $a_n \ne 0$, and n is the largest integer with this property. The zero polynomial is defined by convention to have degree $-\infty$. (This is necessary in order to make the degree formulas work out.) Alternatively, you can say that the degree of the zero polynomial is undefined; in that case, you will need to make minor changes to some of the results below.

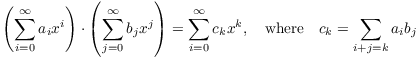

Polynomials are added componentwise, and multiplied using the "convolution" formula:

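$$\sum_{i=0}^\infty a_i x^i + \sum_{i=0}^\infty b_i x^i = \sum_{i=0}^\infty (a_i + b_i) x^i, \qquad \left(\sum_{i=0}^\infty a_i x^i\right)\left(\sum_{j=0}^\infty b_j x^j\right) = \sum_{k=0}^\infty \left(\sum_{i+j=k} a_i b_j\right) x^k$$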

These formulas say that you compute sums and products as usual.
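Here is a minimal Python sketch of componentwise addition and the convolution product. It assumes the coefficient ring is $\mathbb{Z}_n$ and that a polynomial is stored as a list whose entry `i` is the coefficient of $x^i$; the function names are hypothetical, chosen only for this illustration.

```python
def poly_trim(coeffs):
    """Drop trailing zeros so the list ends at the leading coefficient."""
    coeffs = list(coeffs)
    while coeffs and coeffs[-1] == 0:
        coeffs.pop()
    return coeffs


def poly_add(f, g, n):
    """Componentwise sum in Z_n[x]; f[i] is the coefficient of x^i."""
    size = max(len(f), len(g))
    f = list(f) + [0] * (size - len(f))
    g = list(g) + [0] * (size - len(g))
    return poly_trim([(a + b) % n for a, b in zip(f, g)])


def poly_mul(f, g, n):
    """Convolution product in Z_n[x]: c_k is the sum of a_i * b_j over i + j = k."""
    if not f or not g:
        return []  # the zero polynomial is the empty list
    product = [0] * (len(f) + len(g) - 1)
    for i, a in enumerate(f):
        for j, b in enumerate(g):
            product[i + j] = (product[i + j] + a * b) % n
    return poly_trim(product)


# (x + 1)(x + 1) = x^2 + 2x + 1 in Z_5[x]
square = poly_mul([1, 1], [1, 1], 5)
```

Storing the zero polynomial as the empty list keeps the "all but finitely many coefficients are 0" condition automatic: only the finitely many coefficients up to the leading one are stored.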

Example. (Polynomial arithmetic) (a) Compute

![]()

(b) Compute

![]()

(a)

![]()

![]()

(b)

![]()

![]()

Let R be an integral domain. If $f \in R[x]$, write $\deg f$ to denote the degree of f. It's easy to show that the degree function satisfies the following properties:

$$\deg(f + g) \le \max(\deg f, \deg g)$$

$$\deg(f \cdot g) = \deg f + \deg g$$

The verifications amount to writing out the formal sums, with a little attention paid to the case of the zero polynomial. These formulas do work if either f or g is equal to the zero polynomial, provided that $-\infty$ is understood to behave in the obvious ways (e.g. $-\infty + k = -\infty$ for any $k \in \mathbb{Z}$).

Example. (Degrees of polynomials) (a) Give examples of polynomials ![]() such that ![]()

(b) Give examples of polynomials ![]() such that ![]() .

(a)

![]()

This shows that equality might not hold in $\deg(f + g) \le \max(\deg f, \deg g)$. $\square$

(b)

![]()

Proposition. Let F be a field, and let $F[x]$ be the polynomial ring in one variable over F. The units in $F[x]$ are exactly the nonzero elements of F.

Proof. It's clear that the nonzero elements of F are invertible in $F[x]$, since they're already invertible in F. Conversely, suppose that $f \in F[x]$ is invertible, so $fg = 1$ for some $g \in F[x]$. Then $\deg f + \deg g = \deg 1 = 0$, which is impossible unless f and g both have degree 0. In particular, f is a nonzero constant, i.e. an element of F. $\square$

Theorem. (Division Algorithm) Let F be a field, and let $f, g \in F[x]$. Suppose that $g \ne 0$. There are unique polynomials $q, r \in F[x]$ such that

$$f = gq + r, \quad\text{where}\quad \deg r < \deg g.$$

Proof. The idea is to imitate the proof of the Division Algorithm for $\mathbb{Z}$.

Let

$$S = \{f - gq \mid q \in F[x]\}.$$

The set $\{\deg s \mid s \in S\}$ is a subset of the nonnegative integers together with $-\infty$, and therefore must contain a smallest element by well-ordering. Let $r \in S$ be an element in S of smallest degree, and write

$$r = f - gq, \quad\text{where}\quad q \in F[x].$$

I need to show that $\deg r < \deg g$.

If $r = 0$, then since $g \ne 0$, I have $\deg g \ge 0 > -\infty = \deg r$.

Suppose then that $r \ne 0$. Assume toward a contradiction that $\deg r \ge \deg g$. Write

$$r = r_n x^n + \cdots + r_1 x + r_0,$$

$$g = g_m x^m + \cdots + g_1 x + g_0.$$

Assume $r_n, g_m \ne 0$, and $n \ge m$.

Consider the polynomial

$$r - r_n g_m^{-1} x^{n-m} g = (r_n x^n + \cdots) - (r_n x^n + \cdots).$$

(The inverse $g_m^{-1}$ exists because F is a field.) Its degree is less than n, since the n-th degree terms cancel out.

However,

$$r - r_n g_m^{-1} x^{n-m} g = f - gq - r_n g_m^{-1} x^{n-m} g = f - g\left(q + r_n g_m^{-1} x^{n-m}\right).$$

The latter is an element of S.

I've found an element of S of smaller degree than r, which is a contradiction. It follows that $\deg r < \deg g$.

Finally, to prove uniqueness, suppose

$$f = gq + r = gq' + r', \quad\text{where}\quad \deg r, \deg r' < \deg g.$$

Rearranging the equation, I get

$$g(q - q') = r' - r.$$

Then

$$\deg(r' - r) = \deg g + \deg(q - q').$$

But $\deg(r' - r) \le \max(\deg r, \deg r') < \deg g$. The equation can only hold if

$$\deg(r' - r) = -\infty \quad\text{and}\quad \deg(q - q') = -\infty.$$

This means

$$r' - r = 0 \quad\text{and}\quad q - q' = 0.$$

Hence, $r = r'$ and $q = q'$. $\square$
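The cancellation step in the existence proof is one step of long division: multiply the divisor by a suitable monomial and subtract it to kill the leading term. Here is a minimal Python sketch of that loop, assuming the field is $\mathbb{Z}_p$ for a prime p and that polynomials are coefficient lists with entry `i` holding the coefficient of $x^i$. The name `poly_divmod` is hypothetical.

```python
def poly_divmod(f, g, p):
    """Divide f by g in Z_p[x] (p prime); return (q, r) with deg r < deg g."""
    g = [c % p for c in g]
    while g and g[-1] == 0:
        g.pop()
    if not g:
        raise ZeroDivisionError("the divisor must be a nonzero polynomial")

    r = [c % p for c in f]
    while r and r[-1] == 0:
        r.pop()

    # Inverse of the leading coefficient of g; exists because Z_p is a field.
    # Three-argument pow with exponent -1 needs Python 3.8 or later.
    lead_inv = pow(g[-1], -1, p)

    q = [0] * max(len(r) - len(g) + 1, 0)
    while len(r) >= len(g):
        shift = len(r) - len(g)          # this is n - m in the proof
        factor = (r[-1] * lead_inv) % p  # this is r_n * g_m^{-1}
        q[shift] = factor
        for i, c in enumerate(g):
            r[i + shift] = (r[i + shift] - factor * c) % p
        while r and r[-1] == 0:          # the top-degree term has cancelled
            r.pop()
    return q, r


# Divide x^3 + 2x + 1 by x + 3 in Z_5[x].
quotient, remainder = poly_divmod([1, 2, 0, 1], [3, 1], 5)
```

In this run the quotient is $x^2 + 2x + 1$ and the remainder is 3, which can be checked by multiplying out $(x + 3)(x^2 + 2x + 1) + 3$ mod 5.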

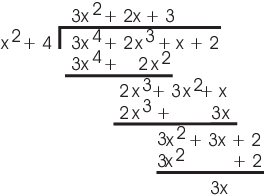

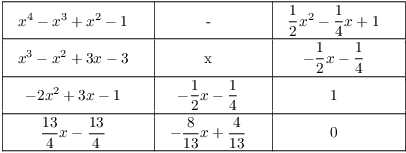

Example. ( Polynomial

division) Divide ![]() by

by ![]() in

in ![]() .

.

Remember as you follow the division that ![]() ,

, ![]() , and

, and ![]() --- I'm doing arithmetic mod 5.

--- I'm doing arithmetic mod 5.

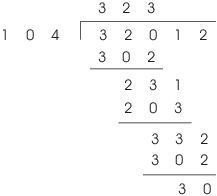

If you prefer, you can do long division without writing the powers of x --- i.e. just writing down the coefficients. Here's how it looks:

Either way, the quotient is ![]() and the remainder

is

and the remainder

is ![]() :

:

![]()

Definition. Let R be a commutative ring and let $f(x) \in R[x]$. An element $c \in R$ is a root of $f(x)$ if $f(c) = 0$.

Note that polynomials are actually formal sums, not functions. However, it is obvious how to plug a number into a polynomial. Specifically, let

$$f(x) = a_n x^n + a_{n-1} x^{n-1} + \cdots + a_1 x + a_0 \in R[x].$$

For $c \in R$, define

$$f(c) = a_n c^n + a_{n-1} c^{n-1} + \cdots + a_1 c + a_0.$$

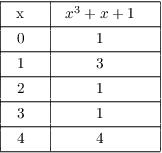

Observe that a polynomial can be nonzero as a polynomial even if it equals 0 for every input! For example, ![]() is a nonzero polynomial. However, plugging in the two elements of the coefficient ring $\mathbb{Z}_2$ gives

![]()
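To see this phenomenon concretely, here is a small Python sketch that plugs elements of $\mathbb{Z}_n$ into a polynomial using Horner's rule. The polynomial $x^2 + x \in \mathbb{Z}_2[x]$ is an assumed example picked for this sketch, and `poly_eval` is a hypothetical helper name.

```python
def poly_eval(coeffs, c, n):
    """Evaluate a_0 + a_1 x + ... + a_k x^k at x = c in Z_n (Horner's rule)."""
    value = 0
    for a in reversed(coeffs):
        value = (value * c + a) % n
    return value


f = [0, 1, 1]  # x^2 + x, a nonzero polynomial: two of its coefficients are 1

# Evaluate f at every element of Z_2.
values = [poly_eval(f, c, 2) for c in range(2)]
```

Both values come out to 0, even though the coefficient list of `f` is not the zero list. As a function on $\mathbb{Z}_2$ it is identically zero, but as a formal sum it is not the zero polynomial.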

Theorem. Let F be a field, and let $f(x) \in F[x]$, where $\deg f = n \ge 0$.

(a) (The Root Theorem) c is a root of $f(x)$ in F if and only if $x - c \mid f(x)$.

(b) $f(x)$ has at most n roots in F.

Proof. (a) Suppose $f(c) = 0$. Write

$$f(x) = (x - c) q(x) + r(x), \quad\text{where}\quad \deg r(x) < \deg(x - c) = 1.$$

Then $\deg r(x) = 0$ or $\deg r(x) = -\infty$.

In the first case, r is a nonzero constant. However, this implies that

$$0 = f(c) = (c - c) q(c) + r(c) = r(c) \ne 0.$$

This contradiction shows that $r(x) = 0$, and $f(x) = (x - c) q(x)$.

Conversely, if $x - c$ is a factor of $f(x)$, then $f(x) = (x - c) q(x)$ for some $q(x) \in F[x]$. Hence,

$$f(c) = (c - c) q(c) = 0.$$

Hence, c is a root of f.

(b) If $c_1, \ldots, c_m$ are the distinct roots of f in F, then

$$(x - c_1)(x - c_2) \cdots (x - c_m) \mid f(x).$$

Taking degrees on both sides gives $m \le n$. $\square$

Example. (Applying the Root Theorem) In ![]() , show:

(a) $x - 1$ is a factor of ![]() .

(b) $x - 1$ is a factor of ![]() for any ![]() .

(a) If ![]() , then ![]() . Hence, 1 is a root of ![]() , and by the Root Theorem $x - 1$ is a factor of ![]() . $\square$

(b) If ![]() , then ![]() . Hence, 1 is a root of ![]() , so $x - 1$ is a factor of ![]() by the Root Theorem. $\square$

Example. (Applying the Root Theorem) Prove that ![]() is divisible by ![]() in ![]() .

Plugging ![]() into ![]() gives

![]()

Since ![]() is a root, ![]() is a factor by the Root Theorem. $\square$

Remark. If the ground ring isn't a field, it's possible for a polynomial to have more roots than its degree. For example, the quadratic polynomial ![]() has roots ![]() , ![]() , ![]() , ![]() . The previous result does not apply, because ![]() is not a field.
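A brute-force root search makes the remark easy to check by machine. The sketch below assumes the ground ring is $\mathbb{Z}_n$; the polynomial $x^2 - 1$ over $\mathbb{Z}_8$ is an assumed illustration chosen for this sketch, and `roots_mod_n` is a hypothetical name.

```python
def roots_mod_n(coeffs, n):
    """Return every c in Z_n with f(c) = 0, where coeffs[i] is the x^i coefficient."""
    roots = []
    for c in range(n):
        value = 0
        for a in reversed(coeffs):  # Horner's rule, reducing mod n at each step
            value = (value * c + a) % n
        if value == 0:
            roots.append(c)
    return roots


quadratic = [-1, 0, 1]                      # x^2 - 1
roots_in_z8 = roots_mod_n(quadratic, 8)     # Z_8 is not a field
roots_in_z7 = roots_mod_n(quadratic, 7)     # Z_7 is a field
```

Over $\mathbb{Z}_8$ the search finds the four roots 1, 3, 5, 7, which is more than the degree. Over the field $\mathbb{Z}_7$ it finds only 1 and 6, as the theorem requires.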

Corollary. (The Remainder Theorem) Let F be a field, $c \in F$, and let $f(x) \in F[x]$. When $f(x)$ is divided by $x - c$, the remainder is $f(c)$.

Proof. Divide $f(x)$ by $x - c$:

$$f(x) = q(x)(x - c) + r(x).$$

Since $\deg r(x) < \deg(x - c) = 1$, it follows that $r(x)$ is a constant. But

$$f(c) = q(c)(c - c) + r(c) = r(c).$$

Therefore, the constant value of $r(x)$ is $r(c) = f(c)$. $\square$

Example. (Applying the Remainder Theorem) Suppose ![]() leaves a remainder of 5 when divided by ![]() and a remainder of -1 when divided by ![]() . What is the remainder when ![]() is divided by ![]() ?

By the Remainder Theorem,

![]()

Now divide ![]() by ![]() . The remainder ![]() has degree less than ![]() , so ![]() for some ![]() :

![]()

Then

![]()

Solving the two equations for a and b, I get ![]() and ![]() . Thus, the remainder is ![]() . $\square$

Definition. Let R be an integral domain.

(a) If $x, y \in R$, then x divides y if $xz = y$ for some $z \in R$. Write $x \mid y$ to mean that x divides y.

(b) x and y are associates if $xu = y$, where u is a unit.

(Recall that a unit in a ring is an element with a multiplicative inverse.)

(c) An element $x \in R$ is irreducible if $x \ne 0$, x is not a unit, and if $x = yz$ implies either y is a unit or z is a unit.

(d) An element $x \in R$ is prime if $x \ne 0$, x is not a unit, and $x \mid yz$ implies $x \mid y$ or $x \mid z$.

Proposition. A nonzero nonconstant polynomial $f(x) \in F[x]$ is irreducible if and only if $f(x) = g(x) h(x)$ implies that either g or h is a constant.

Proof. Suppose $f(x)$ is irreducible and $f(x) = g(x) h(x)$. Then one of $g(x)$, $h(x)$ is a unit. But we showed earlier that the units in $F[x]$ are the nonzero constant polynomials.

Suppose that $f(x)$ is a nonzero nonconstant polynomial, and $f(x) = g(x) h(x)$ implies that either g or h is a constant. Since f is nonconstant, it's not a unit. Note that if $f(x) = g(x) h(x)$, then $g(x), h(x) \ne 0$, since $f(x) \ne 0$.

Therefore, the condition that $f(x) = g(x) h(x)$ implies that either g or h is a constant means that $f(x) = g(x) h(x)$ implies that either $g(x)$ or $h(x)$ is a unit (again, since the nonzero constant polynomials are the units in $F[x]$). This is what it means for f to be irreducible. $\square$

Example. Show that ![]() is irreducible in $\mathbb{R}[x]$ but not in ![]() .

![]() has no real roots, so by the Root Theorem it has no linear factors. Hence, it's irreducible in $\mathbb{R}[x]$.

However, ![]() in ![]() . $\square$

Corollary. Let F be a field. A polynomial of degree 2 or 3 in $F[x]$ is irreducible if and only if it has no roots in F.

Proof. Suppose $f \in F[x]$ has degree 2 or 3.

If f is not irreducible, then $f(x) = g(x) h(x)$, where neither g nor h is constant. Now $\deg g \ge 1$ and $\deg h \ge 1$, and

$$\deg g + \deg h = \deg f = 2 \text{ or } 3.$$

This is only possible if at least one of g or h has degree 1. This means that at least one of g or h is a linear factor $ax + b$ with $a \ne 0$, and must therefore have a root in F (namely $-a^{-1} b$). Since $f(x) = g(x) h(x)$, it follows that f has a root in F as well.

Conversely, if f has a root c in F, then $x - c$ is a factor of f by the Root Theorem. Since f has degree 2 or 3, $x - c$ is a proper factor, and f is not irreducible. $\square$

Remark. The result is false for polynomials of degree 4 or higher. For example, ![]() has no roots in ![]() , but it is not irreducible over ![]() .

Example. (Checking for irreducibility of a quadratic or cubic) Show that ![]() is irreducible.

Since this is a cubic polynomial, I only need to see whether it has any roots.

Since ![]() has no roots in ![]() , it's irreducible. $\square$
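Over a finite field the root test in the corollary is a finite check. Here is a minimal Python sketch, assuming the field is $\mathbb{Z}_p$ for a prime p. The function names and the test polynomials are hypothetical choices for this illustration.

```python
def poly_eval(coeffs, c, p):
    """Evaluate the polynomial with x^i coefficient coeffs[i] at c, mod p."""
    value = 0
    for a in reversed(coeffs):
        value = (value * c + a) % p
    return value


def is_irreducible_deg_2_or_3(coeffs, p):
    """Root test over Z_p (p prime); only valid for degree 2 or 3."""
    coeffs = [a % p for a in coeffs]
    while coeffs and coeffs[-1] == 0:
        coeffs.pop()
    if len(coeffs) - 1 not in (2, 3):
        # In degree 4 or higher, having no roots does not imply irreducibility.
        raise ValueError("the root test only applies in degree 2 or 3")
    return all(poly_eval(coeffs, c, p) != 0 for c in range(p))


cubic_over_z2 = is_irreducible_deg_2_or_3([1, 1, 0, 1], 2)   # x^3 + x + 1
quadratic_over_z5 = is_irreducible_deg_2_or_3([1, 0, 1], 5)  # x^2 + 1
```

The cubic $x^3 + x + 1$ takes the value 1 at both elements of $\mathbb{Z}_2$, so it is irreducible there. The quadratic $x^2 + 1$ has the root 2 in $\mathbb{Z}_5$, so it is not irreducible over $\mathbb{Z}_5$. The function refuses other degrees rather than return a misleading answer.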

Proposition. In an integral domain, primes are irreducible.

Proof. Let x be prime. I must show x is irreducible. Suppose $x = yz$. I must show either y or z is a unit.

$x = yz$, so obviously $x \mid yz$. Thus, $x \mid y$ or $x \mid z$. Without loss of generality, suppose $x \mid y$.

Write $xw = y$. Then $x = yz = xwz$, and since $x \ne 0$ (primes are nonzero) and we're in a domain, $1 = wz$. Therefore, z is a unit, and x is irreducible. $\square$

Definition. Let R be an integral domain, and let $x, y \in R$. $d \in R$ is a greatest common divisor of x and y if:

(a) $d \mid x$ and $d \mid y$.

(b) If $d' \mid x$ and $d' \mid y$, then $d' \mid d$.

The definition says "a" greatest common divisor, rather than "the" greatest common divisor, because greatest common divisors are only unique up to multiplication by units.

The definition above is the right one if you're dealing with an arbitrary integral domain. However, if your ring is a polynomial ring, it's nice to single out a "special" greatest common divisor and call it the greatest common divisor.

Definition. A monic polynomial is a polynomial whose leading coefficient is 1.

For example, here are some monic polynomials over ![]() :

![]()

Definition. Let F be a field, let $F[x]$ be the ring of polynomials with coefficients in F, and let $f, g \in F[x]$, where f and g are not both zero. The greatest common divisor of f and g is the monic polynomial which is a greatest common divisor of f and g (in the integral domain sense).

Example. (Polynomial greatest common divisors) Find the greatest common divisor of ![]() and ![]() in ![]() .

![]() is a greatest common divisor of ![]() and ![]() :

![]()

![]()

Notice that any nonzero constant multiple of ![]() is also a greatest common divisor of ![]() and ![]() (in the integral domain sense): For example, ![]() works. This makes sense, because the units in ![]() are the nonzero elements of ![]() . But by convention, I'll refer to ![]() (the monic greatest common divisor) as the greatest common divisor of ![]() and ![]() . $\square$

The preceding definition assumes there is a greatest common divisor for two polynomials in $F[x]$. In fact, the greatest common divisor of two polynomials exists, provided that the polynomials aren't both 0, and the proof is essentially the same as the proof for greatest common divisors of integers.

In both cases, the idea is to use the Division Algorithm repeatedly until you obtain a remainder of 0. This must happen in the polynomial case, because the Division Algorithm for polynomials specifies that the remainder has strictly smaller degree than the divisor.

Just as in the case of the integers, each use of the Division Algorithm does not change the greatest common divisor. So the last pair has the same greatest common divisor as the first pair --- but the last pair consists of 0 and the last nonzero remainder, so the last nonzero remainder is the greatest common divisor.

This process is called the Euclidean algorithm, just as in the case of the integers.

Let h and $h'$ be two greatest common divisors of f and g. By definition, $h \mid h'$ and $h' \mid h$. From this, it follows that h and $h'$ have the same degree, and are constant multiples of one another. If h and $h'$ are both monic (i.e. both have leading coefficient 1), this is only possible if they're equal. So there is a unique monic greatest common divisor for any two polynomials.

Finally, the same proofs that I gave for the integers show that you can write the greatest common divisor of two polynomials as a linear combination of the two polynomials. You can use the Extended Euclidean Algorithm that you learned for integers to find a linear combination. To summarize:

Theorem. Let F be a field, $f, g \in F[x]$, f and g not both 0.

(a) f and g have a unique (monic) greatest common divisor.

(b) There exist polynomials $u, v \in F[x]$ such that

$$\gcd(f, g) = uf + vg.$$

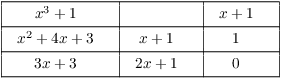
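The repeated-division argument translates directly into code. Below is a minimal Python sketch of the Extended Euclidean Algorithm, assuming the field is $\mathbb{Z}_p$ for a prime p, with polynomials stored as coefficient lists (entry `i` is the coefficient of $x^i$, and the zero polynomial is the empty list). All helper names are hypothetical, and the inputs must not both be zero.

```python
def trim(f):
    """Drop trailing zeros so the list ends at the leading coefficient."""
    f = list(f)
    while f and f[-1] == 0:
        f.pop()
    return f


def sub(f, g, p):
    """Return f - g in Z_p[x]."""
    size = max(len(f), len(g))
    f = list(f) + [0] * (size - len(f))
    g = list(g) + [0] * (size - len(g))
    return trim([(a - b) % p for a, b in zip(f, g)])


def mul(f, g, p):
    """Return f * g in Z_p[x] using the convolution formula."""
    if not f or not g:
        return []
    out = [0] * (len(f) + len(g) - 1)
    for i, a in enumerate(f):
        for j, b in enumerate(g):
            out[i + j] = (out[i + j] + a * b) % p
    return trim(out)


def divmod_poly(f, g, p):
    """Return (q, r) with f = g*q + r and deg r < deg g; f, g trimmed, g nonzero."""
    q, r = [0] * max(len(f) - len(g) + 1, 0), list(f)
    inv = pow(g[-1], -1, p)  # needs Python 3.8 or later
    while len(r) >= len(g):
        shift, factor = len(r) - len(g), (r[-1] * inv) % p
        q[shift] = factor
        r = sub(r, mul([0] * shift + [factor], g, p), p)
    return trim(q), r


def extended_gcd(f, g, p):
    """Return (d, u, v) with d the monic gcd of f and g, and d = u*f + v*g."""
    r0, r1 = trim([c % p for c in f]), trim([c % p for c in g])
    u0, u1, v0, v1 = [1], [], [], [1]   # invariant: r_k = u_k*f + v_k*g
    while r1:
        q, r = divmod_poly(r0, r1, p)
        r0, r1 = r1, r
        u0, u1 = u1, sub(u0, mul(q, u1, p), p)
        v0, v1 = v1, sub(v0, mul(q, v1, p), p)
    scale = [pow(r0[-1], -1, p)]        # multiply by a unit to make d monic
    return mul(r0, scale, p), mul(u0, scale, p), mul(v0, scale, p)


# gcd of x^2 - 1 and x^2 + 2x + 1 in Z_5[x]
d, u, v = extended_gcd([-1, 0, 1], [1, 2, 1], 5)
```

For these inputs the last nonzero remainder is $3x + 3$, and scaling by $3^{-1} = 2$ gives the monic greatest common divisor $x + 1 = 2(x^2 - 1) + 3(x^2 + 2x + 1)$ in $\mathbb{Z}_5[x]$.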

Example. (Applying the Extended Euclidean Algorithm) Find the greatest common divisor of ![]() and ![]() in ![]() and express the greatest common divisor as a linear combination of ![]() and ![]() with coefficients in ![]() .

The greatest common divisor is ![]() . The greatest common divisor is only determined up to multiplying by a unit, so multiplying by ![]() gives the monic greatest common divisor ![]() .

You can check that

![]()

Example. (Applying the Extended Euclidean Algorithm) Find the greatest common divisor of ![]() and ![]() in ![]() and express the greatest common divisor as a linear combination of ![]() and ![]() with coefficients in ![]() .

The greatest common divisor is ![]() , and

![]()

The greatest common divisor is only determined up to multiplying by a unit. So, for example, I can multiply the last equation by 2 to get

![]()

Copyright 2018 by Bruce Ikenaga