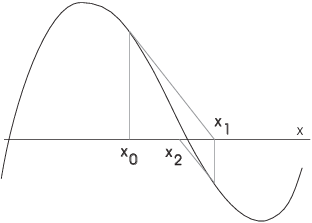

Newton's method is simple to describe

pictorially. To find a root of an equation ![]() , start at a

point

, start at a

point ![]() . Go up to the curve. From there, "slide

down" the tangent line till you hit the x-axis. That's

. Go up to the curve. From there, "slide

down" the tangent line till you hit the x-axis. That's ![]() .

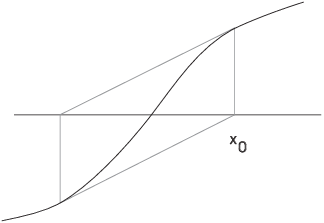

Now repeat the process. As you can see in the following picture, this

can work quite well in "nice" cases.

.

Now repeat the process. As you can see in the following picture, this

can work quite well in "nice" cases.

It is possible for things to go wrong. In the next picture, the process seems to be bouncing around aimlessly without getting closer to the root on the left.

In fact, if one of the bounces winds up right below the critical point, the horizontal tangent will shoot you off to infinity!

Here is another situation in which Newton's method fails. This time there's an oscillation of period 2.

When Newton's method does work, it works well: Roughly, the number of places of accuracy doubles with each step. However, things can also go wrong even more spectacularly than in the bad cases above. Later on, I'll discuss a spectacular result due to Barna (1956, 1961) which illustrates this.

The formula for Newton's method is easy. If your current guess is

![]() , you want to know where the tangent at

, you want to know where the tangent at ![]() hits the x-axis. The tangent line has slope

hits the x-axis. The tangent line has slope ![]() , and passes through the point

, and passes through the point ![]() . Its

equation is

. Its

equation is

![]()

Set ![]() to find the x-intercept. If I call the x-intercept

to find the x-intercept. If I call the x-intercept

![]() , then

, then

![]()

This is the formula for Newton's method. Note that if you luck out

and hit a root, ![]() and

and ![]() --- the procedure stabilizes.

--- the procedure stabilizes.

Example. Suppose ![]() and

and ![]() . What is the next value of x if Newton's method is

applied to f starting at

. What is the next value of x if Newton's method is

applied to f starting at ![]() ?

?

The next value of x is

![]()

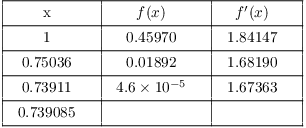

Example. Use Newton's method to approximate a

solution to ![]() .

.

A graph shows that ![]() is a reasonable starting point. I have

is a reasonable starting point. I have ![]() , so

, so

![]()

I can iterate the Newton function:

![]()

This might be appropriate if you're using a program.

I'll do things step-by-step so you can see the values of x, ![]() ,

and

,

and ![]() :

:

The last result is good to 6 places.

It turns out that for the equation ![]() , there is an easy way to

find the solution iteratively on your calculator. Take the starting

number --- say

, there is an easy way to

find the solution iteratively on your calculator. Take the starting

number --- say ![]() --- and repeatedly take the cosine. On some

calculators, you need to take the cosine of the last result

explicitly; on others, you can just keep pushing the cosine button.

The process will converge --- more slowly than Newton's method --- to

the solution above.

--- and repeatedly take the cosine. On some

calculators, you need to take the cosine of the last result

explicitly; on others, you can just keep pushing the cosine button.

The process will converge --- more slowly than Newton's method --- to

the solution above.![]()

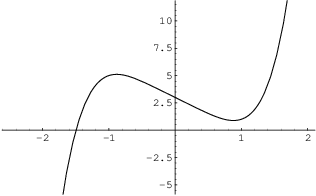

Example. Solve ![]() .

.

Note that there is no "general quintic formula", so without using very complicated mathematical functions, I must approximate a root.

First, I graph ![]() .

.

There appears to be a single root around ![]() .

.

The derivative is ![]() . I can iterate the Newton

function

. I can iterate the Newton

function

![]()

This might be appropriate if you're using a program.

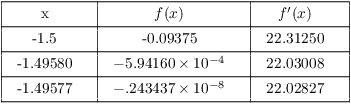

I'll set up a table and compute the values step-by-step. Here is the table:

![]() is correct to 5 places.

is correct to 5 places.

Notice that Newton's method converges very rapidly (when it works).

As I noted earlier, the number of correct places will roughly double

with each iteration.![]()

Example. ( A square root

algorithm) Use Newton's method to approximate ![]() .

.

The positive solution to ![]() is

is ![]() , so I'll apply

Newton's method to

, so I'll apply

Newton's method to ![]() . I have

. I have ![]() , so

, so

![]()

If you iterate this function with a good starting point, you

approximate ![]() . For example, starting at

. For example, starting at ![]() ,

the first few iterates are

,

the first few iterates are

![]()

The last one is already good to 5 places.

In general, for ![]() ,

, ![]() can be approximated by iterating

can be approximated by iterating

![]()

This algorithm is often used in square root routines for

computers.![]()

There is a spectacular result due to Barna ([1], [2]) which demonstrates how badly Newton's method can fail. I'll state a special case.

Take a polynomial ![]() of degree

of degree ![]() . In fact, take one of degree

28, with 28 distinct roots. For example, you could use

. In fact, take one of degree

28, with 28 distinct roots. For example, you could use

![]()

Choose f so that:

(a) Between two roots of ![]() there is a root of

there is a root of ![]() .

.

(b) Between two roots of ![]() there is a root of

there is a root of ![]() .

.

(c) All the roots of ![]() are inside the interval determined by the

biggest and smallest roots of f.

are inside the interval determined by the

biggest and smallest roots of f.

(d) All the roots of ![]() are inside the interval determined by the

biggest and smallest roots of

are inside the interval determined by the

biggest and smallest roots of ![]() .

.

These conditions simply say that f is a rather generic polynomial;

they rule out things such as multiple roots for ![]() and

and

![]() .

.

For ![]() , you can see that f has 28

roots, and

, you can see that f has 28

roots, and ![]() has 27. I've labelled the roots of

has 27. I've labelled the roots of ![]() as

as

![]() ,

, ![]() , .... Here's a schematic picture of the

intervals:

, .... Here's a schematic picture of the

intervals:

![]()

I've also labelled the intervals between the r's with the letters A, B, C, ....

When you do Newton's method, if you hit a root r of ![]() ,

the tangent is horizontal and you get shot out to infinity.

Otherwise, you bounce around between the intervals labelled with

letters.

,

the tangent is horizontal and you get shot out to infinity.

Otherwise, you bounce around between the intervals labelled with

letters.

Barna's result says that if you take any string of letters, there is a starting point for Newton's method which "spells out" that string. That is, the successive iterates land in the intervals labelled by the letters for the string.

So if you took the string "STROMBOLI", the first iterate would be in the S interval, the second in the T interval, the third in the R interval, and so on.

You could take a string which spelled out an encyclopedia --- or a history of your life! --- and Barna's result says there's a starting point such that Newton's method would spell the string.

As you might expect, there's a catch. The catch is that while the result guarantees that such a starting point exists, it doesn't give any way of finding it. Maybe that's a good thing!

Copyright 2018 by Bruce Ikenaga